The Pixhawk is a high-performance autopilot system designed by the PX4 open-hardware project and manufactured by 3D Robotics. It can be used with fixed-wing aircraft, multi-rotor aircraft, cars, boats and any other autonomous vehicle, and is aimed at research, amateur and industry users alike.

It is a combination of the PX4FMU Autopilot / Flight Management Unit and the PX4IO Airplane/Rover Servo and I/O Module previously created by PX4.

The Pixhawk includes the following sensors:

- ST Micro L3GD20H 16-bit gyroscope.

- ST Micro LSM303D 14-bit accelerometer / magnetometer.

- MEAS MS5611 barometer.

It also allows the connection of a number of other useful external devices, such as the 3DR Radio Set and the Bluetooth data link described below.

It is controlled by an app available on a number of different hardware platforms: Mission Planner (Windows), APM Planner (Windows, OS X and Linux) or DroidPlanner 2 (Android).

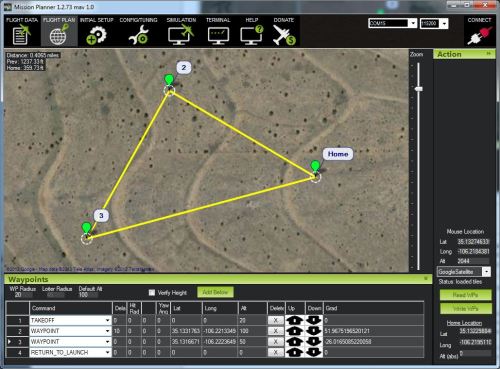

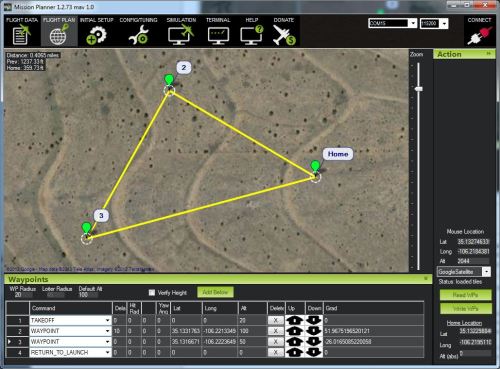

Aerial flight paths can easily be set in the software by clicking waypoints on a map of the area.

By drawing a polygon around the area, the software can create a grid flight pattern to fly. The altitude, camera type and image overlap can also be set, which alters the number of times the aerial vehicle flies across the area under study. The resulting images can be used to create either an image mosaic or a photogrammetry model.
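To show why altitude, camera type and overlap change the number of passes, here is a rough Python sketch. It is not how Mission Planner or any other ground station actually computes its grid; it assumes a camera pointing straight down over flat ground, a rectangular survey area, and that the camera type is reduced to a single horizontal field of view. All function and parameter names are made up for illustration.

```python
import math


def footprint_width(altitude_m, hfov_deg):
    """Ground width covered by one image (assumes a nadir camera, flat ground)."""
    return 2.0 * altitude_m * math.tan(math.radians(hfov_deg) / 2.0)


def line_spacing(altitude_m, hfov_deg, sidelap):
    """Distance between adjacent flight lines for a given side overlap (0 to <1)."""
    if not 0.0 <= sidelap < 1.0:
        raise ValueError("sidelap must be in the range [0, 1)")
    return footprint_width(altitude_m, hfov_deg) * (1.0 - sidelap)


def pass_count(area_width_m, altitude_m, hfov_deg, sidelap):
    """Approximate number of passes needed to cover the width of the area."""
    spacing = line_spacing(altitude_m, hfov_deg, sidelap)
    return max(1, math.ceil(area_width_m / spacing))


if __name__ == "__main__":
    # Hypothetical survey: 210 m wide area, camera with a 90 degree field of view.
    for altitude, sidelap in [(50, 0.5), (50, 0.8), (100, 0.5)]:
        passes = pass_count(210, altitude, 90, sidelap)
        print(f"altitude={altitude} m, sidelap={sidelap:.0%}: {passes} passes")
```

Under these assumptions, flying higher or using a wider lens widens the ground footprint and reduces the number of passes, while a larger overlap narrows the spacing between lines and increases it.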

The 3DR Radio Set allows wireless communication between the Pixhawk and an Android device running the DroidPlanner or Andropilot ground station app. The Bluetooth data link offers an alternative, letting any Bluetooth-equipped Android device with a ground station app connect to the Pixhawk. Both options also support the software's Follow Me mode, in which the autopilot follows the device that is running the app.

As it is an open-hardware project, the schematics can be downloaded.

The Pixhawk costs $199.99 in its basic form, or $474.97 with all of the standard available options.

Potential

The Pixhawk has great potential for the control of any type of autonomous vehicle, whether flying mapping missions, flying a predetermined course to record things, or recording site tours in “follow-me” mode. Because it is part of an open-source community, it is continually in development, with input from the people who are using the technology.

It has become popular enough in the industry to be the technology used in a number of Kickstarter UAV projects, including the AirDog and HEXO+, as well as 3D Robotics’s IRIS+ quadcopter.

The community provides extensive instructions for the system and its uses.